What Is It?

The SaaS Kick Start Template is a production-ready scaffold that wires Retrieval-Augmented Generation (RAG) directly into your Rails application, with no glue code required. It ships with a vector store adapter layer, an embedding pipeline backed by OpenAI or Ollama, and a clean query interface you can drop into any controller or background job.

Built from real-world experience integrating LLMs into healthcare and SaaS backends, this kit is designed to be boring in the best possible way: plain Ruby objects, standard Rails conventions, and zero magic that breaks when you upgrade.

Stop wrestling with Python microservices. RAG belongs in your Rails monolith.

Who Is It For?

This kit is aimed squarely at Rails engineers who:

- Want to add semantic search or document Q&A to an existing app without spinning up a separate Python service

- Need to embed internal knowledge bases, support docs, or structured records into an LLM context window

- Are evaluating RAG approaches and want a reference implementation that follows Rails idioms

- Have tried LangChain or LlamaIndex and found them too heavyweight for a focused Rails use case

What’s Included

1. Vector Store Adapter

A thin adapter layer over pgvector (a PostgreSQL extension), so your embeddings live right alongside your existing data. Swap to Pinecone or Qdrant by changing a single line in the initializer; no model changes are required.
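
The adapter idea can be sketched in plain Ruby. This is a minimal illustration only: `VectorStoreSketch`, its `Memory` backend, and the `upsert`/`nearest` method names are hypothetical and are not taken from the gem. The point is that callers depend on a small shared contract, so the backend can be chosen by a single symbol.

```ruby
# Hypothetical adapter sketch; class and method names are illustrative, not the gem's API.
module VectorStoreSketch
  # Common contract every adapter is expected to honor.
  class Base
    def upsert(id:, embedding:)
      raise NotImplementedError
    end

    def nearest(embedding, limit:)
      raise NotImplementedError
    end
  end

  # In-memory stand-in for a real backend such as pgvector.
  class Memory < Base
    def initialize
      @rows = {}
    end

    def upsert(id:, embedding:)
      @rows[id] = embedding
    end

    # Returns ids ordered by ascending squared Euclidean distance.
    def nearest(embedding, limit:)
      @rows
        .sort_by { |_id, stored| stored.zip(embedding).sum { |a, b| (a - b)**2 } }
        .first(limit)
        .map(&:first)
    end
  end

  ADAPTERS = { memory: Memory }.freeze

  # Resolve a configured symbol to an adapter instance.
  def self.build(name)
    ADAPTERS.fetch(name) { raise ArgumentError, "unknown vector store: #{name.inspect}" }.new
  end
end

store = VectorStoreSketch.build(:memory)
store.upsert(id: 1, embedding: [1.0, 0.0])
store.upsert(id: 2, embedding: [0.0, 1.0])
store.upsert(id: 3, embedding: [0.9, 0.1])
p store.nearest([1.0, 0.0], limit: 2)
```

Adding another backend in this sketch means writing one more `Base` subclass and registering it in `ADAPTERS`; nothing that calls `upsert` or `nearest` has to change.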

2. Embedding Pipeline

A Rag::EmbeddingJob Active Job class that chunks, cleans, and embeds your documents on creation or update. Supports text-embedding-3-small (OpenAI) and nomic-embed-text (Ollama) out of the box.

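    # Re-embed a document whenever its body changes (on create or update)
    class Article < ApplicationRecord
      # after_commit ensures the job is enqueued only once the row is committed
      after_commit :embed_content, on: [:create, :update], if: :saved_change_to_body?

      private

      def embed_content
        Rag::EmbeddingJob.perform_later(self)
      end
    end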
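
To make the chunking step concrete, here is a rough sketch of a sliding window with overlap. It is not the gem's implementation: `chunk_words` is a hypothetical helper, and it approximates tokens by whitespace-separated words, whereas a real pipeline would presumably count tokens with the embedding model's tokenizer.

```ruby
# Illustrative chunker: splits on whitespace and treats each word as one "token".
# A real pipeline would count tokens with the embedding model's tokenizer.
def chunk_words(text, chunk_size:, chunk_overlap:)
  raise ArgumentError, "chunk_overlap must be smaller than chunk_size" unless chunk_overlap < chunk_size

  words = text.split
  step = chunk_size - chunk_overlap
  chunks = []
  start = 0
  while start < words.length
    chunks << words[start, chunk_size].join(" ")
    break if start + chunk_size >= words.length # last window reached the end
    start += step
  end
  chunks
end

sample = (1..10).map { |i| "w#{i}" }.join(" ")
chunk_words(sample, chunk_size: 4, chunk_overlap: 1).each { |chunk| puts chunk }
```

Each window starts `chunk_size - chunk_overlap` words after the previous one, so neighboring chunks share `chunk_overlap` words. The overlap keeps a sentence that straddles a boundary retrievable from at least one chunk.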

3. Query Interface

A Rag::Query service object that takes a natural-language question, retrieves the top-k relevant chunks via cosine similarity, assembles a context window, and streams a completion back from the LLM of your choice.

    result = Rag::Query.ask(
      question: "What is our refund policy?",
      scope: Article.published,
      top_k: 5
    )

    puts result.answer   # LLM-generated response
    puts result.sources  # Array of matched Article records
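
The retrieval and context-assembly steps can be sketched in plain Ruby as follows. This is an assumption-laden illustration, not the internals of Rag::Query: the helper names, the three-dimensional embeddings, and the sample chunk texts are all made up. With pgvector, the same ranking would normally be done in SQL using its cosine-distance operator (`<=>`) rather than in application code.

```ruby
# Illustrative retrieval step; the chunk data and helper names are made up for this sketch.
def cosine_similarity(a, b)
  dot = a.zip(b).sum { |x, y| x * y }
  norm = Math.sqrt(a.sum { |x| x * x }) * Math.sqrt(b.sum { |y| y * y })
  norm.zero? ? 0.0 : dot / norm
end

# Rank chunks by similarity to the query embedding and keep the best top_k.
def top_k_chunks(query_embedding, chunks, top_k:)
  chunks.max_by(top_k) { |chunk| cosine_similarity(query_embedding, chunk[:embedding]) }
end

# Join the retrieved text into a prompt the LLM can answer from.
def build_prompt(question, chunks)
  context = chunks.map { |chunk| "- #{chunk[:text]}" }.join("\n")
  "Answer using only this context:\n#{context}\n\nQuestion: #{question}"
end

chunks = [
  { text: "Refunds are issued within 30 days.",  embedding: [0.9, 0.1, 0.0] },
  { text: "Our office is closed on Sundays.",    embedding: [0.0, 0.2, 0.9] },
  { text: "Refund requests go through support.", embedding: [0.8, 0.3, 0.1] }
]
query_embedding = [1.0, 0.0, 0.0] # stand-in for the embedded question

best = top_k_chunks(query_embedding, chunks, top_k: 2)
puts build_prompt("What is our refund policy?", best)
```

The unrelated chunk about office hours scores lowest and is left out of the prompt, which is the whole purpose of the top-k cut: only the most relevant text is spent on the model's context window.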

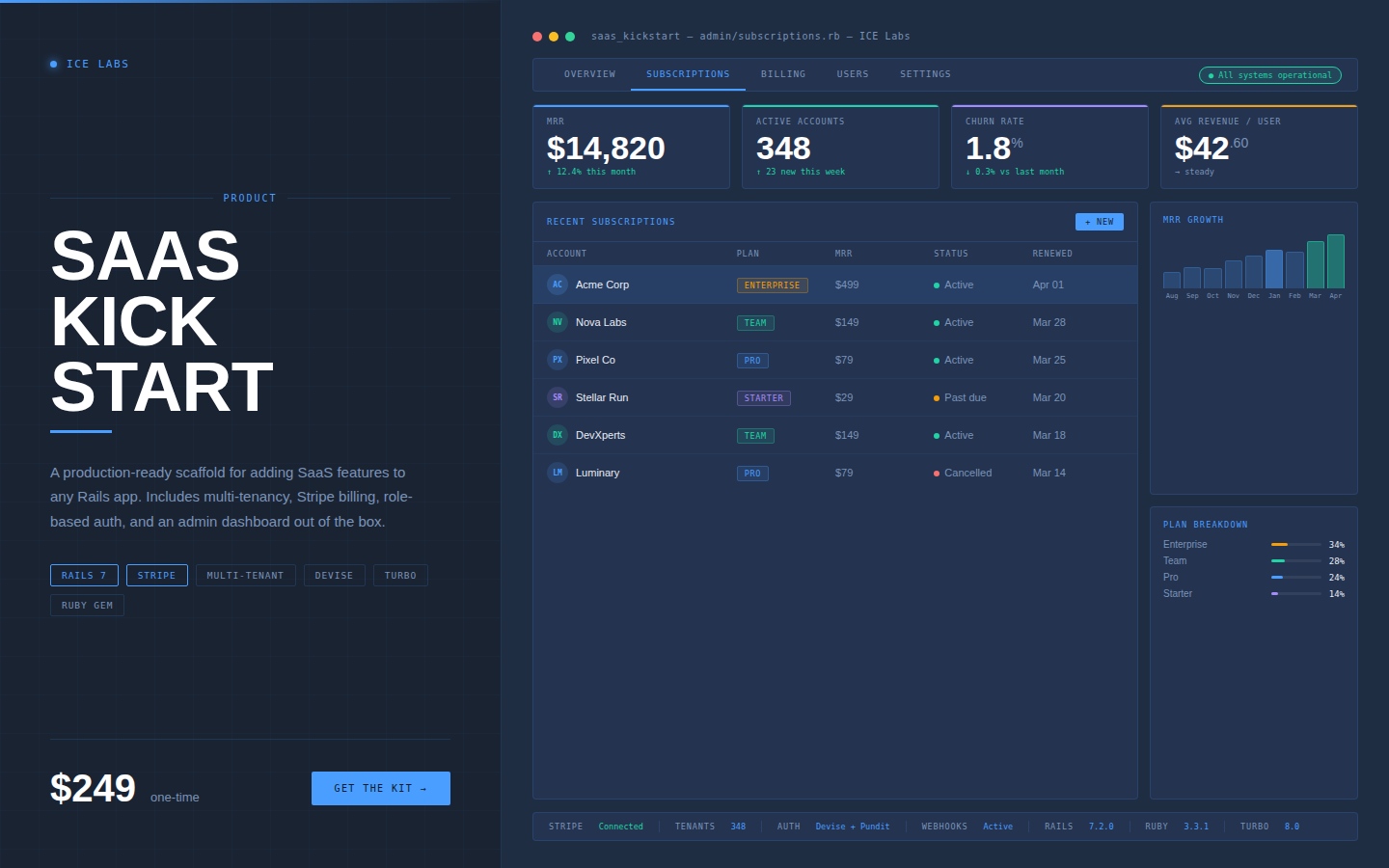

4. Rails Engine & Mountable Dashboard

Mount the included engine to get a read-only dashboard showing embedded document counts, query logs, latency metrics, and embedding coverage — useful during development and QA.

    # config/routes.rb
    mount Rag::Engine, at: "/rag/dashboard"

Quick Start

Installation

    # Gemfile
    gem "rag-rails"

Then install the gem, run the installer, and migrate:

    bundle install
    rails rag:install
    rails db:migrate

Configuration

    # config/initializers/rag.rb
    Rag.configure do |config|
      config.embedding_provider = :openai   # or :ollama
      config.vector_store       = :pgvector # or :pinecone, :qdrant
      config.openai_api_key     = ENV["OPENAI_API_KEY"]
      config.chunk_size         = 512       # tokens per chunk
      config.chunk_overlap      = 64        # tokens shared between neighboring chunks
    end
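
For readers curious how a configure block like this usually works, here is a small sketch of the pattern. `ConfigSketch`, its `Settings` struct, the defaults, and the validation rules are all hypothetical; the gem's real configuration object may differ.

```ruby
# Hypothetical re-creation of a configure-style API; ConfigSketch is not part of the gem.
module ConfigSketch
  Settings = Struct.new(:embedding_provider, :vector_store, :chunk_size, :chunk_overlap) do
    # Fail fast on values that cannot work together.
    def validate!
      unless %i[openai ollama].include?(embedding_provider)
        raise ArgumentError, "unsupported embedding provider: #{embedding_provider.inspect}"
      end
      raise ArgumentError, "chunk_overlap must be smaller than chunk_size" unless chunk_overlap < chunk_size

      self
    end
  end

  def self.config
    @config ||= Settings.new(:openai, :pgvector, 512, 64)
  end

  # Yield the settings object to the caller, then validate what was set.
  def self.configure
    yield config
    config.validate!
  end
end

ConfigSketch.configure do |config|
  config.embedding_provider = :ollama
  config.chunk_size = 256
  config.chunk_overlap = 32
end

puts ConfigSketch.config.to_h
```

Validating at boot, inside `configure`, surfaces a bad combination such as an overlap larger than the chunk size when the app starts instead of when the first embedding job runs.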

Compatibility

| Dependency | Version | Notes |
|---|---|---|
| Ruby | 3.1+ | Tested on 3.1, 3.2, 3.3 |
| Rails | 7.0+ | Including Rails 8 (which itself requires Ruby 3.2+) |
| PostgreSQL | 14+ | Requires the pgvector extension |
| OpenAI API | n/a | Optional; Ollama is supported as an alternative |

Roadmap

- Multi-modal embeddings (image + text)

- Streaming responses via Hotwire Turbo Streams

- Hybrid BM25 + vector search

- LangGraph-style agent loop integration

License

Released under the MIT License. Free to use in commercial and open-source projects. Contributions welcome via GitHub pull requests.